When I applied for the IT-Trainee program at Commerzbank, I wanted to show something concrete, not just a CV but working software. So instead of submitting a static page, I built leonalexisoet.com as part of the application. The idea was simple: let the code speak for itself.

A quick note to avoid confusion: this post describes leonalexisoet.com, the portfolio site I built for the application. You are reading it on blog.leonalexisoet.com, which is a separate site for my writing. Two different projects, two different domains.

Starting from scratch

The tech stack is React 19, TypeScript, and TailwindCSS v4, bundled with Vite. These are current tools used in modern product teams, not safe legacy choices. The layout is a bento grid of live widget cards (a chess board, a blog feed, a booking calendar, and an AI chat assistant) that adapts cleanly from mobile to desktop.

Every widget has consistent loading states, error handling, and dark mode support. These were not afterthoughts. They were built as shared infrastructure before any individual widget was wired up, so the experience is coherent across the whole page.
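The post does not show how that shared infrastructure is typed. One common TypeScript approach, sketched here as an assumption rather than the site's actual code, is a discriminated union that every widget's data hook returns, so a card cannot render data that has not arrived yet. `WidgetState` and `renderWidgetText` are hypothetical names, and the function returns a string instead of JSX to keep the sketch self-contained.

```typescript
// Hypothetical shared state type: every widget's data hook would return one of these.
type WidgetState<T> =
  | { status: "loading" }
  | { status: "error"; message: string }
  | { status: "ready"; data: T };

// Maps any widget state to display text; a React version would return JSX instead.
function renderWidgetText<T>(state: WidgetState<T>, render: (data: T) => string): string {
  switch (state.status) {
    case "loading":
      return "Loading...";
    case "error":
      return `Could not load: ${state.message}`;
    case "ready":
      return render(state.data);
    default: {
      // Compile-time exhaustiveness check: fails to compile if a new status is not handled above.
      const unhandled: never = state;
      return unhandled;
    }
  }
}
```

The `never` check in the default branch means that adding a fourth status (an empty state, for example) breaks the build until every consumer handles it, which is one way to keep behavior consistent across all widgets.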

Building with Claude Code and the GSD framework

This project was built using Claude Code, paired with a structured workflow called the GSD framework (Get Shit Done).

Claude Code is Anthropic’s command-line AI coding assistant. Unlike a chat window where you paste code back and forth, it runs directly inside the project: it reads existing files to understand context, edits across multiple files at once, runs the build and tests, inspects the git history, and commits the work itself. It works the way a developer works, not the way a chatbot works.

The problem with using AI to write code is well known. Models produce plausible code that does not actually do what was asked. The output looks right, gets accepted, and the bug only surfaces later. A second failure mode is scope drift: ask for one thing, receive five unrequested “improvements”, and end up with a diff that is impossible to review. Both failures share a root cause: there is no upfront contract about what “done” means, so anything that compiles can be called done.

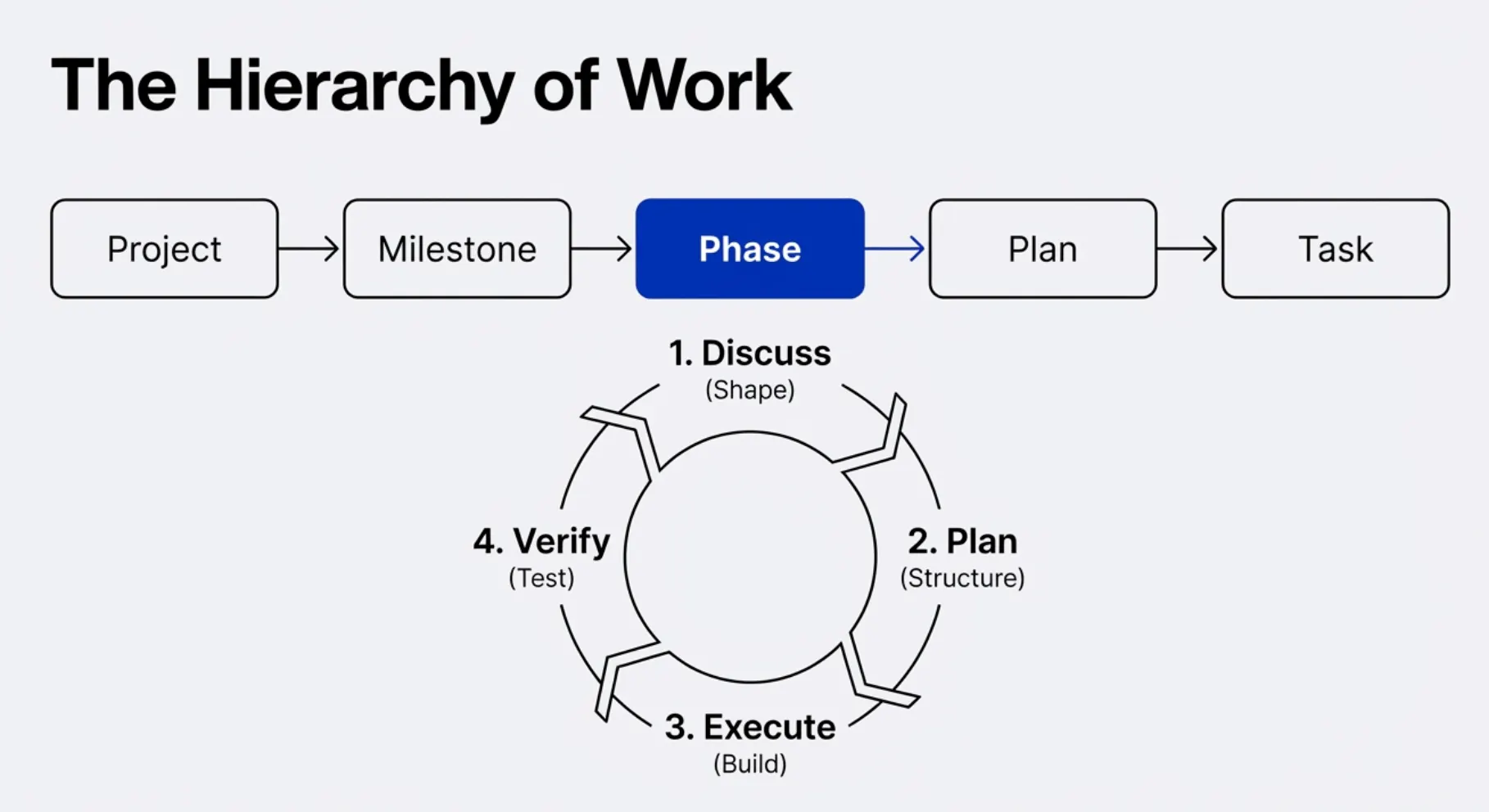

GSD is a phase-based methodology designed to prevent both. Before any code is written, each phase defines explicit success criteria: not “the feature should work” but specific, testable statements of what must be true when the phase is finished. Either they pass or they do not.

Every phase follows the same structure: discuss the goal, plan the implementation, execute the work, then verify against the criteria. Research, decisions, plans, and verification results are all written down and committed alongside the code, so the reasoning behind every change stays visible in the repository.

This forces clarity upfront and leaves no room to vaguely declare something finished. Across eleven phases and 252 commits, this structure is what kept the project coherent and on track.

Browser automation with Playwright MCP

One of the more forward-looking parts of the setup was using the Playwright MCP server alongside Claude Code. MCP (Model Context Protocol) is a standard that lets AI tools connect to external services, in this case a real browser.

This meant Claude Code could open the site, navigate it, take screenshots, and verify that things looked correct visually, without me checking every state by hand. For QA, it opened the site across multiple screen sizes, switched between light and dark mode, and confirmed nothing was broken. That kind of automated visual verification would have taken hours manually.

An AI chat assistant

The most complex feature is a floating chat widget where visitors can ask natural-language questions about me. Click the bubble, ask something, get an answer about my background, skills, or projects.

The backend calls the OpenAI API with a curated knowledge base injected as context: a detailed document that tells the model what to say about me and how to frame it. Responses stream in token by token, so text starts appearing almost immediately instead of after the whole answer has been generated. The API key never leaves the server, and cost controls are enforced server-side. It works the way a production feature should.
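The post does not show the server code that reads the stream. Assuming the backend calls OpenAI's Chat Completions endpoint with `stream: true`, the response arrives as server-sent events: each `data:` line carries a JSON chunk whose `choices[0].delta.content` holds the next piece of text, and the stream ends with `data: [DONE]`. A minimal parser for that format might look like this; `createSseDeltaParser` is a hypothetical name, not part of any SDK.

```typescript
// Minimal shape of one streamed Chat Completions chunk; only the field we read.
interface ChatChunk {
  choices?: { delta?: { content?: string } }[];
}

// Hypothetical helper: feed() accepts raw text as it arrives from the network and
// returns the text deltas completed by that piece.
function createSseDeltaParser(): { feed: (text: string) => string[]; done: () => boolean } {
  let buffer = "";
  let finished = false;

  const feed = (text: string): string[] => {
    buffer += text;
    const lines = buffer.split("\n");
    // Network chunks do not align with events, so keep the incomplete trailing line for later.
    buffer = lines.pop() ?? "";
    const deltas: string[] = [];
    for (const raw of lines) {
      const line = raw.trim();
      if (!line.startsWith("data:")) continue; // skip blank separators and comment lines
      const payload = line.slice("data:".length).trim();
      if (payload === "[DONE]") {
        finished = true;
        continue;
      }
      const chunk = JSON.parse(payload) as ChatChunk;
      const content = chunk.choices?.[0]?.delta?.content;
      if (content) deltas.push(content); // the first chunk carries only the role, so it is skipped
    }
    return deltas;
  };

  return { feed, done: () => finished };
}
```

On the server, each string returned by `feed` could be forwarded to the browser immediately, which is what makes the answer appear to type itself out.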

Live chess

The chess widget connects to the Lichess API and shows my games in real time. When I am playing, the board updates move by move as the game happens. When I am not, it shows my last completed game with controls to step through every move. The switch between these two modes is automatic.
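The post does not say which Lichess endpoints feed the widget, so this sketch assumes the site's proxy already returns either an ongoing game or the last finished one as a list of moves. Under that assumption, the automatic switch reduces to a pure function and the replay controls to a clamped cursor over the move list. `GameSummary`, `chooseView`, and `stepReplay` are hypothetical names.

```typescript
// Hypothetical shape of a game as returned by the site's own proxy.
interface GameSummary {
  id: string;
  moves: string[]; // e.g. SAN moves in playing order
}

type ChessView =
  | { mode: "live"; game: GameSummary }
  | { mode: "replay"; game: GameSummary; ply: number }; // ply = number of moves applied

// Automatic switch: an ongoing game always wins; otherwise show the last game at its final position.
function chooseView(live: GameSummary | null, last: GameSummary | null): ChessView | null {
  if (live) return { mode: "live", game: live };
  if (last) return { mode: "replay", game: last, ply: last.moves.length };
  return null;
}

// Step controls: move the cursor and clamp it between the start position (0) and the final move.
function stepReplay(view: ChessView, delta: number): ChessView {
  if (view.mode !== "replay") return view; // live boards follow the server, not the buttons
  const ply = Math.min(Math.max(view.ply + delta, 0), view.game.moves.length);
  return { ...view, ply };
}
```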

Getting real-time data from an external API into a browser without hitting security restrictions required building a small server-side proxy. A practical problem with a clean solution, and the kind of thing that only comes up when you are actually shipping something.
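A minimal sketch of such a proxy, assuming it forwards Lichess's `/api/stream/game/{id}` endpoint, which streams game updates as newline-delimited JSON (NDJSON). The browser then only talks to the site's own origin. `proxyGameStream` and the injectable `Fetcher` type are hypothetical; injecting `fetch` just makes the handler testable without network access. It relies on the standard `fetch` and `Response` globals available in Node 18 or later.

```typescript
// Hypothetical injectable fetch so the handler can be tested without network access.
type Fetcher = (url: string, init?: { headers?: Record<string, string> }) => Promise<Response>;

// Lichess game IDs are eight alphanumeric characters; anything else is rejected.
const GAME_ID = /^[A-Za-z0-9]{8}$/;

// Server-side proxy: the browser calls this route on the site's own origin, and the
// server forwards the upstream NDJSON stream (endpoint assumed, see lead-in).
async function proxyGameStream(gameId: string, fetcher: Fetcher = fetch): Promise<Response> {
  if (!GAME_ID.test(gameId)) {
    return new Response("invalid game id", { status: 400 });
  }
  const upstream = await fetcher(`https://lichess.org/api/stream/game/${gameId}`, {
    headers: { Accept: "application/x-ndjson" },
  });
  if (!upstream.ok || upstream.body === null) {
    return new Response("upstream error", { status: 502 });
  }
  // Pass the body through as a stream so each update reaches the browser as soon as it arrives.
  return new Response(upstream.body, {
    status: 200,
    headers: { "Content-Type": "application/x-ndjson", "Cache-Control": "no-store" },
  });
}
```

Validating the ID keeps callers from injecting arbitrary paths into the upstream URL, and `Cache-Control: no-store` stops an edge cache from serving a stale copy of a live game.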

Blog feed

The blog widget pulls my latest posts live from an RSS feed and refreshes automatically. The challenge was getting data from a different domain into the browser cleanly. A small server-side function handles the fetch and transformation, and a lightweight data-fetching library takes care of caching, polling, and loading states on the client side.
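The post does not say how the feed is parsed. A minimal server-side sketch, assuming a standard RSS 2.0 feed whose `<item>` elements contain `<title>`, `<link>`, and `<pubDate>`, turns the XML into the small JSON shape the widget needs. `parseRssItems` and `FeedPost` are hypothetical, and regex extraction is a deliberate simplification that ignores XML entities and namespaces; a real implementation would more likely use an XML parser.

```typescript
interface FeedPost {
  title: string;
  link: string;
  published: string;
}

// Returns the text of the first <tag>...</tag> in an item, unwrapping CDATA if present.
function readTag(itemXml: string, tag: string): string {
  const match = itemXml.match(new RegExp(`<${tag}(?:\\s[^>]*)?>([\\s\\S]*?)</${tag}>`));
  if (!match) return "";
  const raw = (match[1] ?? "").trim();
  const cdata = raw.match(/^<!\[CDATA\[([\s\S]*?)\]\]>$/);
  return (cdata ? (cdata[1] ?? "") : raw).trim();
}

// Simplified RSS 2.0 extraction: no entity decoding, namespaces, or Atom support.
function parseRssItems(xml: string, limit = 3): FeedPost[] {
  const items = xml.match(/<item[\s>][\s\S]*?<\/item>/g) ?? [];
  return items.slice(0, limit).map((item) => ({
    title: readTag(item, "title"),
    link: readTag(item, "link"),
    published: readTag(item, "pubDate"),
  }));
}
```

Doing this on the server means the browser receives a few small JSON objects instead of the full feed, which keeps the client-side polling cheap.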

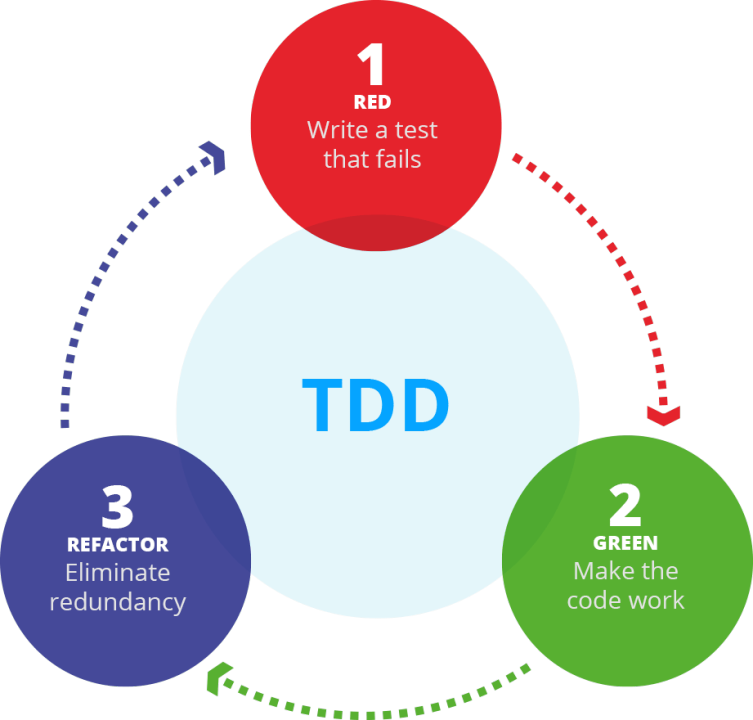

Test-driven development

Every significant piece of logic was built test-first. Write the tests, watch them fail, then write the code to make them pass. The GSD framework treats this as a formal phase type, not optional and not a cleanup step at the end.

This approach paid off repeatedly. When the streaming pipeline needed to be refactored, or the chess game logic was rewritten, the test suite caught regressions immediately. Tests were not documentation or compliance. They were what made it safe to move fast.

End-to-end tests ran against a real browser using Playwright, verifying that the site looked and behaved correctly across viewport sizes and themes.
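The post does not list the viewports or themes. Assuming the tests use Playwright's `projects` option, the viewport-by-theme matrix can be generated instead of written by hand; each entry sets Playwright's `viewport` and `colorScheme` options. The sizes and the `buildProjects` helper are hypothetical, and the helper deliberately avoids importing `@playwright/test` so the sketch runs on its own.

```typescript
// Hypothetical viewport sizes; the post does not list the ones actually tested.
const viewports = {
  mobile: { width: 390, height: 844 },
  tablet: { width: 768, height: 1024 },
  desktop: { width: 1440, height: 900 },
};
const themes = ["light", "dark"] as const;

// Same shape as an entry in Playwright's `projects` array using `viewport` and `colorScheme`.
interface ProjectSpec {
  name: string;
  use: { viewport: { width: number; height: number }; colorScheme: "light" | "dark" };
}

// Every viewport crossed with every theme: 3 x 2 = 6 projects.
function buildProjects(): ProjectSpec[] {
  return Object.entries(viewports).flatMap(([size, viewport]) =>
    themes.map((colorScheme) => ({ name: `${size}-${colorScheme}`, use: { viewport, colorScheme } })),
  );
}
```

In a `playwright.config.ts`, the result could be passed as `projects: buildProjects()` inside `defineConfig`, so each spec runs once per combination. `colorScheme` emulates `prefers-color-scheme`, so it only exercises dark mode if the site follows the system setting; a manual theme toggle would need an extra click in the test.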

Phased planning

The project ran for three weeks across eleven defined phases. Each phase had research, a plan with verifiable outcomes, execution, and a verification check. Features were sequenced by dependency rather than convenience: shared infrastructure before individual features, fallback modes before live ones, backend verification before any frontend was written.
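Sequencing by dependency is a topological sort. The sketch below uses Kahn's algorithm on a hypothetical phase graph whose names mirror the rules above (shared infrastructure first, fallback before live, backend before frontend); the post does not publish its actual phase graph, and `buildOrder` is an illustrative name.

```typescript
// Hypothetical phase graph: each phase lists the phases it depends on.
type DependencyGraph = Record<string, string[]>;

// Kahn's algorithm: repeatedly schedule phases whose dependencies are all done.
function buildOrder(graph: DependencyGraph): string[] {
  const unmet = new Map<string, number>(); // phase -> number of unfinished dependencies
  const dependents = new Map<string, string[]>(); // phase -> phases waiting on it
  for (const [phase, deps] of Object.entries(graph)) {
    unmet.set(phase, deps.length);
    for (const dep of deps) {
      if (!Object.prototype.hasOwnProperty.call(graph, dep)) throw new Error(`unknown dependency ${dep}`);
      dependents.set(dep, [...(dependents.get(dep) ?? []), phase]);
    }
  }
  const ready = [...unmet].filter(([, n]) => n === 0).map(([phase]) => phase);
  const order: string[] = [];
  while (ready.length > 0) {
    const phase = ready.shift()!;
    order.push(phase);
    for (const next of dependents.get(phase) ?? []) {
      const remaining = unmet.get(next)! - 1;
      unmet.set(next, remaining);
      if (remaining === 0) ready.push(next);
    }
  }
  // Any phase never scheduled is part of a cycle, so no valid order exists.
  if (order.length !== unmet.size) throw new Error("dependency cycle");
  return order;
}
```

A cycle surfaces as an error rather than a silently wrong plan, which is the point of writing the dependencies down before building.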

This is how complex software stays manageable. Not by doing everything at once, but by knowing what depends on what and building in the right order.

Deployment

The site is deployed on Vercel, connected to GitHub. Every push to the main branch triggers an automatic build and deploy. The server-side functions for the chess proxy, blog feed, and chat API are deployed alongside the frontend from the same repository with no extra configuration.

What this project shows

This site is a small project. But how you build something reveals how you think. This one was planned before it was coded, tested before it was shipped, and structured so that each piece could be verified independently.

It was built specifically to accompany my application for the IT-Trainee program at Commerzbank, not as decoration but as a concrete answer to the question: how does this person actually work?